Hermes Agent: A Revolution in AI Agents… or Simply the Next Evolution?

The rise of AI agents is gradually transforming how language models are used in production. More recently, personal agents such as OpenClaw have redefined what agentic AI can do for individual users. Hermes Agent, developed by Nous Research, positions itself as an ambitious evolution of this kind of agent: it promises to retain what it learns over time, structure its experience, and autonomously develop its own skills.

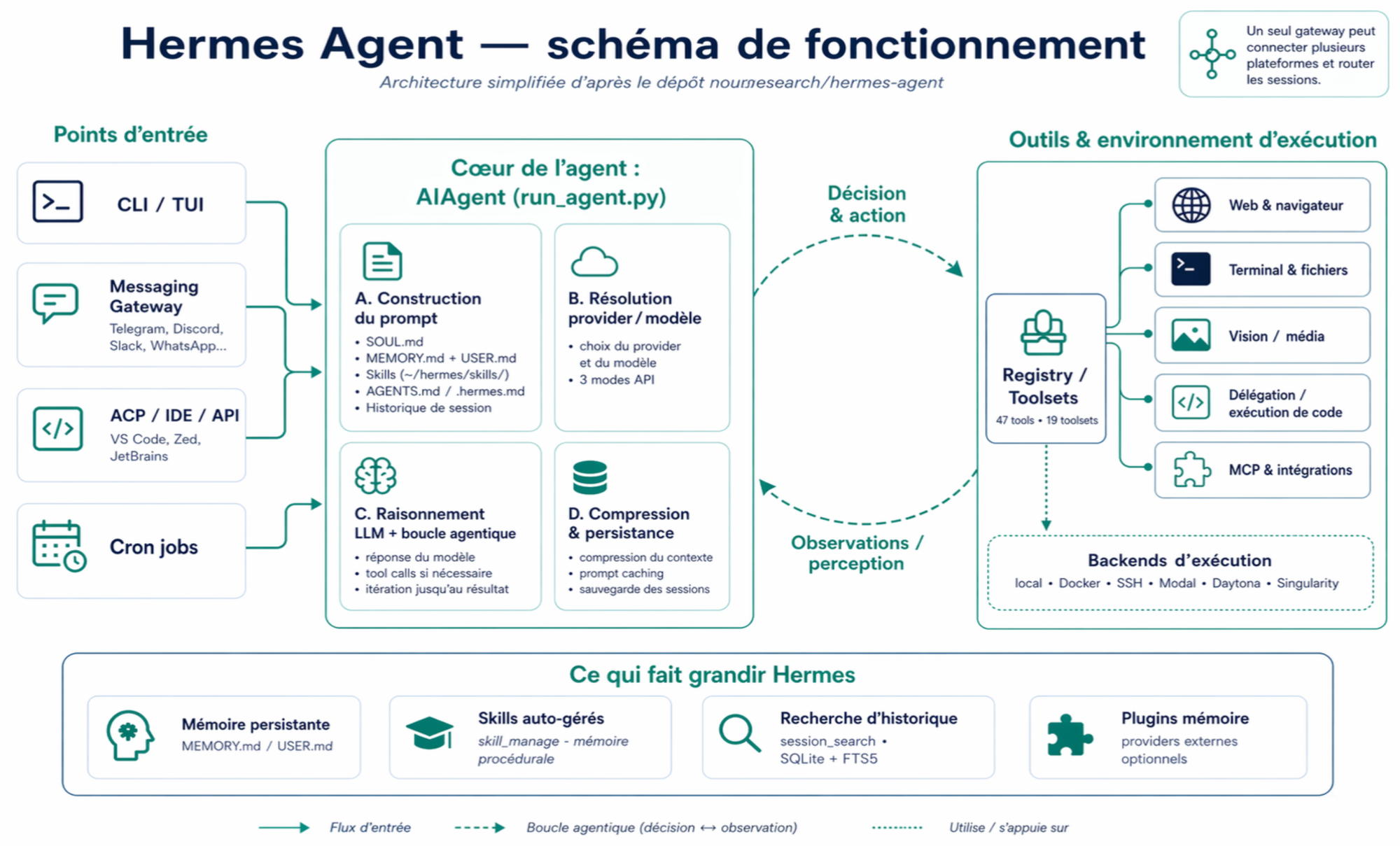

An Architecture Built Around Persistence

Unlike traditional assistants, Hermes Agent does not treat each user interaction as independent. It introduces a persistent memory layer that stores skills, that is, procedures generated from previously completed tasks.

When a task is executed, the agent does not simply produce an answer. It also formalizes the process as a reusable document. That document can then be invoked in similar contexts, enabling a gradual improvement in performance.

The mechanism relies on a loop that is simple in principle: solve, formalize, store, and reuse. In practice, however, it fundamentally changes the nature of the system: the agent progressively gets better at the specific tasks it has already practiced.
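To make the loop concrete, here is a minimal Python sketch. It is not Hermes Agent's actual implementation or API: the `Skill` and `SkillStore` classes, the `run_task` function, and the keyword-overlap matching are all hypothetical, chosen only to illustrate the solve, formalize, store, and reuse steps with an in-memory list standing in for persistent storage.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Skill:
    """A reusable procedure distilled from a completed task (hypothetical shape)."""
    name: str
    keywords: frozenset[str]
    steps: list[str]
    uses: int = 0


@dataclass
class SkillStore:
    """In-memory stand-in for a persistent skill library."""
    skills: list[Skill] = field(default_factory=list)

    def add(self, skill: Skill) -> None:
        self.skills.append(skill)

    def find(self, task: str, min_overlap: int = 2) -> Optional[Skill]:
        """Return the skill sharing the most words with the task, if any."""
        words = set(task.lower().split())
        best, best_score = None, 0
        for skill in self.skills:
            score = len(words & skill.keywords)
            if score > best_score:
                best, best_score = skill, score
        return best if best_score >= min_overlap else None


def run_task(
    task: str,
    store: SkillStore,
    solve: Callable[[str], list[str]],
) -> tuple[list[str], bool]:
    """Run one pass of the loop; returns (steps, reused_existing_skill)."""
    skill = store.find(task)
    if skill is not None:
        # Reuse: a similar task was already solved, so replay its procedure.
        skill.uses += 1
        return skill.steps, True

    # Solve: `solve` stands in for the expensive model call.
    steps = solve(task)
    # Formalize: turn the result into a named, searchable procedure.
    skill = Skill(name=task, keywords=frozenset(task.lower().split()), steps=steps)
    # Store: keep it for later tasks.
    store.add(skill)
    return steps, False
```

In this sketch, a second task that shares at least two words with a stored skill skips the solver entirely. A real system would need a far more robust matching strategy than word overlap.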

From Orchestration to the Accumulation of Skills

Hermes stands apart from more traditional agent frameworks, such as OpenClaw, by integrating a stronger form of memory persistence.

In a system like OpenClaw, the main value lies in the ability to orchestrate API calls, chain actions, and structure a workflow. Memory is generally secondary, if not absent.

Hermes, while still capable of these actions, shifts the center of gravity toward the accumulation of skills. The system tries not only to complete a task but also to build a base of procedural knowledge that it can later draw upon.

This distinction is essential. It marks a shift from an execution-oriented model to a learning-oriented one.

Still-Emerging Use Cases

In practice, Hermes Agent is most relevant in contexts where repetition and structure play a central role. Software development is a natural first domain. The agent can capitalize on recurring patterns, generate documentation, and automate certain maintenance tasks.

Knowledge management use cases also appear promising. By accumulating summaries, analyses, and procedures, Hermes can gradually become a structured access point to the information of a project or an organization.

Finally, long-running automation scenarios, including scheduled tasks and continuous workflows, highlight the value of a persistent agent. In such cases, the ability to retain state and reuse past solutions becomes a decisive advantage.

The Limits of Context-Based Learning

The promise of an agent that “learns” should, however, be qualified. In Hermes, learning does not rely on updating the model’s weights, but on the accumulation of documents and procedures.

In other words, the system does not change its internal representation of the world. It simply enriches the context it can access. This distinction has important implications. It limits the depth of learning and makes the system dependent on the quality of the traces it produces itself.
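The sketch below illustrates what "enriching the context" can mean in practice. It is an assumption-laden toy, not Hermes Agent's mechanism: the `build_prompt` function, the character budget, and the prompt layout are all hypothetical. The point is that the model itself never changes; only the text placed in front of it does, and only as much as the budget allows.

```python
def build_prompt(task: str, skills: list[str], budget_chars: int = 500) -> str:
    """Prepend stored procedures to a task until a size budget is spent.

    `skills` is assumed to be already ranked by relevance; anything that
    does not fit in the budget is simply invisible to the model.
    """
    selected: list[str] = []
    used = 0
    for text in skills:
        if used + len(text) > budget_chars:
            break  # stop at the first procedure that does not fit
        selected.append(text)
        used += len(text)

    context = "\n".join(f"- {s}" for s in selected)
    return f"Known procedures:\n{context}\n\nTask: {task}"
```

Whatever is stored incorrectly gets injected just as faithfully as what is stored correctly, which is why the quality of the traces matters so much.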

This approach may also lead to uncontrolled memory growth. As skills accumulate, governing them becomes more complex. Selecting the right procedures, detecting redundancies, and managing contradictions become central challenges.
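Redundancy detection, one of the governance challenges above, can be sketched in a few lines. The example assumes skills are stored as plain-text procedures keyed by name and uses Jaccard similarity over word sets as a deliberately crude proxy for overlap. The function names and the 0.6 threshold are illustrative choices, not part of Hermes Agent.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two procedures have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def find_redundant(
    skills: dict[str, str], threshold: float = 0.6
) -> list[tuple[str, str, float]]:
    """Return pairs of skill names whose texts overlap above the threshold."""
    tokens = {name: set(text.lower().split()) for name, text in skills.items()}
    pairs = []
    for a, b in combinations(sorted(tokens), 2):
        score = jaccard(tokens[a], tokens[b])
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs
```

Flagged pairs would still need a human or a second pass to decide which version to keep, and word overlap says nothing about two procedures that contradict each other while using the same vocabulary.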

Moreover, performance remains tightly tied to the underlying language model. Hermes does not compensate for the model’s limitations; it merely organizes what the model is already able to produce.

Risk Surface and Security

The introduction of persistence and autonomy also raises critical security questions. An agent capable of executing code, accessing files, or interacting with external services inherently has a very broad — perhaps too broad — scope of action.

In this context, the issue is no longer only the quality of the agent’s responses, but also control. Who validates the agent’s actions? How are its decisions traced? How is its scope of intervention constrained? These questions are fundamental in production, especially in sensitive sectors such as insurance or healthcare.

Memory itself can become an attack vector. Incorrect or malicious information may be stored and later reused, affecting the agent’s future decisions. This dynamic introduces risks similar to those observed in long-context systems, but amplified by persistence.

Sandboxing, permission controls, and auditing mechanisms therefore appear essential for any real-world deployment.
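As an illustration of two of these controls, the sketch below wraps an agent's tools behind an allowlist and records every attempted call. It is a hypothetical design, not Hermes Agent's security model: `GuardedToolbox`, `PermissionDenied`, and the log format are invented for this example. It does not provide sandboxing, which requires isolation at the process or container level.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when the agent requests a tool outside its allowlist."""


@dataclass
class GuardedToolbox:
    tools: dict[str, Callable[..., Any]]  # every tool that exists
    allowed: set[str]                     # tools this agent may call
    audit_log: list[dict] = field(default_factory=list)

    def call(self, name: str, **kwargs: Any) -> Any:
        """Run a tool if permitted; log the attempt in every case."""
        entry = {"ts": time.time(), "tool": name, "args": dict(kwargs)}
        if name not in self.allowed or name not in self.tools:
            entry["outcome"] = "denied"
            self.audit_log.append(entry)
            raise PermissionDenied(name)
        try:
            result = self.tools[name](**kwargs)
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            self.audit_log.append(entry)
            raise
        entry["outcome"] = "ok"
        self.audit_log.append(entry)
        return result
```

Denied and failed calls are logged alongside successful ones, so the audit trail answers both "what did the agent do?" and "what did it try to do?".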

A Gradual Transformation of Use Cases

As it stands, Hermes Agent does not constitute a strict technological break. The components it relies on — language models, external memory, orchestration — are already well known.

What it does propose, however, is a recomposition of these elements around a simple idea: an agent should not have to start from scratch at every interaction.

This shift in perspective, while conceptually modest, has important implications. It brings AI systems closer to operating like a collaborator: able to remember, structure its experience, and improve over time.

The central question is therefore no longer only what an agent can do at a given moment, but what it becomes over time.

That is where the real promise of this new generation of systems lies.